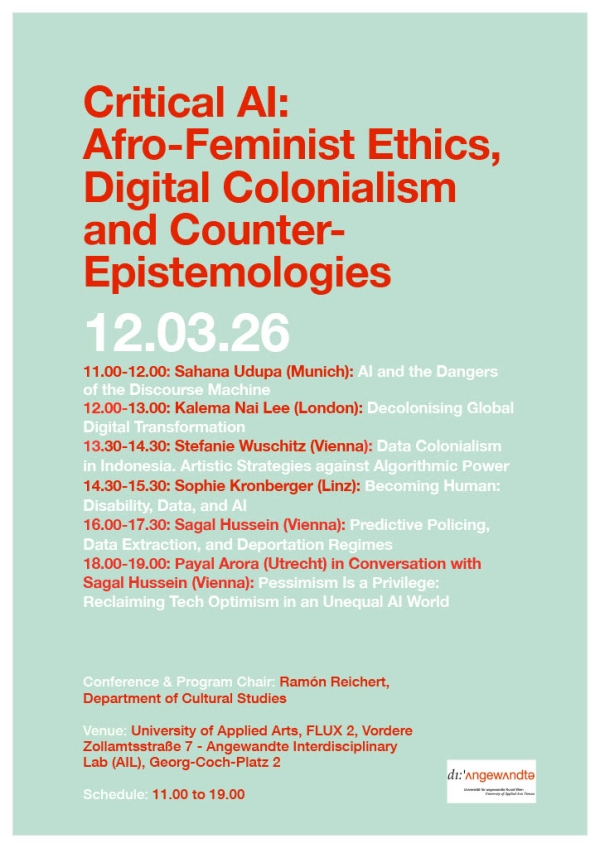

Schedule:

11.00-12.00

Sahana Udupa (Munich): AI and the Dangers of the Discourse Machine

In July 2025, the Elon Musk-owned AI chatbot "Grok" declared itself "MechaHitler" and started spewing antisemitic content and unabashed attacks on what it deems "woke" ideas. Updates that the company had just implemented had realigned the model to abandon some of the central principles of content moderation, prodding it to shed inhibitions and give out "politically incorrect" responses "if they are factual" (Belanger 2025). Grok's gleeful Hitler praise and the MechaHitler brandishing were not an embarrassing aberration or a technical mistake, but an output that flared up precisely through the instructions the model had received and the training data it had been built upon.

What does the Grok case say about the discursive power that emerges in and through conversational AI? How do we read the discursive power of generative AI alongside efforts to deploy AI to detect and moderate hate online? Stepping away from the sentimental binary of AI dystopia and utopia, this talk will scrutinize empirical data to chart the risks and limits of AI against the social traction and semiotic complexity of exclusionary narratives online. Thinking across the workings of generative AI and discriminative AI in extreme speech contexts, I will highlight "ethical scaling" as a normative framework to reimagine communicative AI from "systematically excluded geographies of reason" (Fúnez-Flores 2022, 26).

12.00-13.00

Kalema Nai Lee (London): Decolonising Global Digital Transformation

What if we pursued global digital transformation (GDT) policy and governance as the building of digital infrastructures for global liberation rather than domination (read: decolonising global digital transformation)?

The interdisciplinary, multi-year research on the World Bank Group's Global Digital Transformation initiative delves into the various institutional projects, partnerships, policies, and governance arrangements that characterise its holistic, multi-institutional, and globally coordinated approach to global digital transformation, specifically through global policy, governance, and projects. The research traces the effects of that global policy approach through the World Bank Group's Global DPI, 'Digital Transformation for Development' (DX4D), and global algorithmic governance efforts, and examines its surrounding global partnerships with development donors, defence industry firms, global philanthropy and foundations, and Big Tech. It explores the short-term and potential long-term implications of global digital transformation's current trajectory, interrogating whose interests the current approach primarily serves, how current approaches impact societies (especially their vulnerable and marginalised members), and what history has revealed about the broader transnational effects of such sociotechnical transformations. It also asks what alternative frameworks exist for rethinking global digital transformation's current trajectory and broader effects on societal and human well-being. Fundamentally, the research invites people to begin reimagining global digital transformation as a tool for global liberation rather than domination across multiple levels of governance (read: decolonising global digital transformation).

13.00-13.30

Coffee Break

13.30-14.30

Stefanie Wuschitz (Vienna): Data Colonialism in Indonesia. Artistic Strategies against Algorithmic Power

This lecture unpacks how data colonialism steals both data (biodata, public service data, AI machine learning data) and resources (land, electricity, water, rare earths) for imperialist extractivism in Indonesia. Materials and minerals extracted from Indonesian mines serve the manufacturing of hardware and chips used in server farms for training AI. At the same time, it is AI-powered technology that makes dissent invisible, erases and filters out protest, and quietly implements a new social order. Union members, factory workers, ecofeminists, artists, farmers, migrant workers, students, gig economy workers, human rights advocates, and indigenous peoples are entangled through their silenced struggle against extractivism.

Within the ongoing crises caused by dispossessions in the name of datafication, they create archives, collectives, and networks of solidarity. Informed by older forms of mutual self-aid, these experts perform care, healing, and knowledge transfer through a vibrant creativity. The commons they create and maintain allow for a dignified, sustainable, and economically and socially safer life. Each chapter describes artists, scholars, and activists of different generations talking about their communities' struggles and strategies, addressing the injustices they experience. To this day they remain exposed to violence and to the constant threat of seeing their countercultures, archives, networks, and ideas erased yet again. This book is an attempt to reconstruct erased struggles and at the same time make sense of the inter-generational strategies resonating in Indonesia's vibrant art scene.

14.30-15.30

Sophie Kronberger (Linz): Becoming Human: Disability, Data, and AI

This talk develops a theoretical account of disability (data) justice by examining how artificial intelligence reshapes the epistemic and ontological conditions under which disability and personhood are recognized, measured, and governed. Rather than treating AI as a neutral instrument that occasionally produces biased outcomes, the talk situates it within longer histories of statistical reason, eugenic thought, and normalization: traditions that have defined both disability and the human through calculability, deviation, and administrative legibility. AI extends these traditions by operationalizing normative assumptions at scale, transforming contingent judgments about bodies and minds into automated forms of authority.

The talk examines how contemporary AI systems enact a constrained model of personhood in which individuals are rendered knowable primarily through data profiles, risk scores, and predictive classifications. Within this framework, disabled people are frequently recognized not as relational subjects but as data objects that can be anticipated, managed, and optimized. This epistemic shift has material consequences, reshaping how care, access, and legitimacy are allocated across institutions.

The concept of disability justice can be seen as a challenge to dominant regimes of knowledge and recognition. It foregrounds questions of opacity, refusal, and non-optimization, arguing that justice cannot be achieved solely through better representation or fairer models. Instead, it proposes an alternative orientation toward data and AI and argues for a rethinking of what counts as knowledge, value, and legitimacy in data-driven societies.

15.30-16.00

Coffee Break

16.00-17.30

Sagal Hussein (Vienna): Predictive Policing, Data Extraction, and Deportation Regimes: Artificial Intelligence in Contemporary Law Enforcement

The lecture centers on AI-supported methods as constitutive elements of contemporary policing practices, with particular attention to their deployment in the operations of U.S. Immigration and Customs Enforcement (ICE). Artificial intelligence is examined not as an auxiliary technological layer but as an infrastructural condition that reorganizes surveillance, identification, risk assessment, and enforcement. The analysis traces how machine learning systems, large-scale data aggregation, facial recognition, geospatial analytics, and predictive modeling restructure police epistemology. These systems transform heterogeneous social data (mobility patterns, financial records, communication metadata, biometric markers) into calculable indicators of suspicion and risk. In doing so, AI-supported methods extend the reach of law enforcement beyond discrete acts into probabilistic anticipations of conduct. Policing thus shifts from reactive intervention to preemptive governance grounded in statistical inference.

17.30-18.00

Coffee Break

18.00-19.00

Payal Arora (Utrecht) in Conversation with Sagal Hussein: Pessimism Is a Privilege: Reclaiming Tech Optimism in an Unequal AI World

In the West, AI is increasingly framed through fear, regret, and apocalypse. Once celebrated as progress, technology is now met with what Arora calls pessimism paralysis, a luxury of those who can afford to opt out. Drawing on her latest book, From Pessimism to Promise, Arora argues that tech optimism is not naïve but rational. For communities confronting inequality, climate risk, corruption, and invisibility, AI is a practical tool for survival, creativity, and self-determination. From hyperlocal flood alerts and community health diagnostics to gender-safety systems and refugee storytelling, AI is being shaped as a collaborator, not a saviour. Reclaiming tech optimism does not deny harm; it expands possibility. The future of AI will not be defined by how fast it scales, but by how well it listens.

A conference of the Department of Cultural Studies.